a new year in a new home on a new infra stack

same challenges, same pastimes, same me?

here’s to 2026 – thanks for sticking around

Welcome, weary traveller.


A couple weeks ago, I presented an internal workshop at PointClickCare as part of a Learnathon – 2 weeks of buffet-style workshops delivered by staff and vendors followed by a 1 week project period akin to a Hackathon.

In this session, we covered theory around Kubernetes pod life cycles, along with operator considerations. In the remaining time, we chatted about probes, readiness gates and container life cycle hooks.

Overall it was a great experience. I always find it tricky presenting to a mixed audience (in this case, a mix of both infrastructure engineers and SREs) but at the end of the day the content was relatively digestible and will be a part of our formal learning pathways moving forward.

For the next one, I’ll see if I can add more interactive components so workshop participants get an opportunity to work together rather than each working in a silo in their own lab environment.

There was a practical lab portion to this, published internally, but I’ve sanitized the theory and am releasing it here publicly.

Give it a read and learn something new – I know I did 🙂

At the time of writing, there are several issues (#1124, #1145, #1173, #1256, #1491) opened against Atmosphere-NX/Atmosphere regarding the following error:

A fatal error occurred when running Atmosphere.

Title ID: 010000000000002b

Error Desc: std::abort() called (0xffe)

I encountered it out of nowhere on SW 15.0.1|AMS 1.4.0, but have heard of others facing it as a result of a bad microSD (I already tested mine), or as the result of uncleanly shutting down Atmosphere.

Besides restarting from scratch by setting up a new emuNAND, I’ve also heard of success from restoring an entire emuNAND backup.

Both of these are too nuclear for my tastes – let’s look into how we can recover an existing Atmosphere emuNAND by partially recovering from an eMMC (sysNAND) backup.

Besides a properly backed-up eMMC as documented almost everywhere when first homebrewing the Nintendo Switch (e.g. NH Switch Guide), you’ll also need:

I should note that the files we’ll be recovering from eMMC don’t seem to change much across Switch versions – in recovering my Switch, I ended up using an eMMC backup made on SW 9.0.1.

The process boils down to a few key steps:

Copy /save/8000000000000050 and /save/8000000000000052 from the SYSTEM partition of the eMMC backup, mounted read-only

Restore them to the SYSTEM partition of the emuNAND, mounted read/write

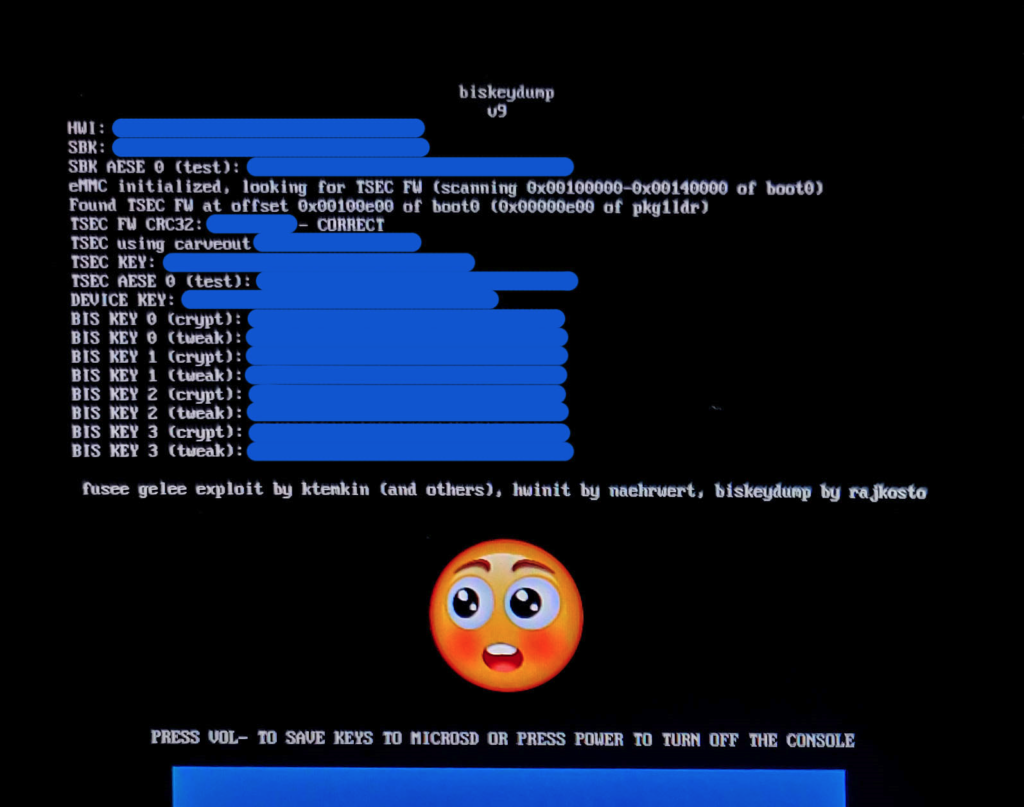

Using Hekate, launch biskeydump payload. You can also directly inject it via TegraRcm like any other binary.

Hit VOL- key to save keys as a text file to root of microSD card (/device.keys)

Shut off the console and get the microSD plugged into your Windows host.

Using NxNandManager, configure keyset by importing exported BIS keys from Switch microSD card (/device.keys).

Load backup eMMC and mount SYSTEM as Read-Only – we don’t want to modify our backup.

If this is the first time doing so, you might be prompted to install the Dokan dokan1 DiskDrive driver. Click Next through the Windows Device Driver Installation Wizard to do so.

From whatever mount path SYSTEM is at, copy the following files to some local folder:

SYSTEM (NxNandManager)/save/8000000000000050

SYSTEM (NxNandManager)/save/8000000000000052

Return to NxNandManager and unmount SYSTEM.
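If you’d rather do this copy from a shell than from Explorer, a byte-for-byte comparison after each copy is cheap insurance. This is only a minimal sketch, assuming a POSIX-style shell such as Git Bash can see the mounted drive; the `copy_verified` helper and the example paths are placeholders of mine, not something NxNandManager provides.

```shell
# copy_verified SRC DEST_DIR
# Copies SRC into DEST_DIR, then compares the copy against the source
# byte for byte. Returns non-zero if the copy or the comparison fails.
copy_verified() {
    mkdir -p "$2" || return 1
    cp "$1" "$2/" || return 1
    cmp -s "$1" "$2/$(basename "$1")"
}

# Hypothetical usage; replace /path/to/SYSTEM with wherever SYSTEM is mounted:
# for id in 8000000000000050 8000000000000052; do
#     copy_verified "/path/to/SYSTEM/save/$id" ./emmc-saves || echo "mismatch: $id"
# done
```

The same helper works for setting aside the suspect emuNAND files in the next step before anything gets deleted.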

Mount our switch microSD card and load emuNAND from device. Ensure that when mounting SYSTEM that Read-Only is unchecked.

Copy the following files to some local folder on your Windows host – they’re most likely bad files, but we don’t want to delete them in the event that recovering from eMMC makes things worse and we end up needing to recover from them.

SYSTEM (NxNandManager)/save/80000000000000d1

SYSTEM (NxNandManager)/save/8000000000000050

SYSTEM (NxNandManager)/save/8000000000000052

Delete the following file from emuNAND:

SYSTEM (NxNandManager)/save/80000000000000d1

Copy the files restored in Step #2 to your emuNAND:

SYSTEM (NxNandManager)/save/8000000000000050

SYSTEM (NxNandManager)/save/8000000000000052

Unmount, close device (similar process at the end of Step #2), and unmount your microSD from Windows.

At this point, you can insert your microSD back into the switch console, boot into RCM, and inject your favourite payload to launch Atmosphere.

The concept of mounting + partially recovering from eMMC seems to be a sacrilegious topic in the Switch homebrew community. I’m hoping that this guide not only helps you fix the ‘A fatal error occurred when running Atmosphere’ Title ID: 010000000000002b panic, but also serves as a beacon for fixing similar issues where the solution involves recovering specific files from eMMC.

There are many ways to build a circular economy – at Repair Cafe Toronto we do it one fix at a time. Thanks Impact Zero for capturing this journey as I speak to this sustainable art in its prime.

Welcome to Sustainability Disruptors, the podcast presented by Impact Zero! Our goal is to have conversations with remarkable individuals who are positively impacting the world and to motivate you to get involved in the sustainability movement, regardless of how small your actions may be. We are delighted to have you as a listener, and we invite you to join our community at impactzero.ca to support our podcast. Let us collaborate to make the world a better and more sustainable place for everyone!

This week’s episode features Alvin Ramoutar, a software developer who leads Repair Cafe Toronto in his free time. Alvin initially joined the Repair Cafe in 2018, but he has since become a crucial part of the core team, supporting their mission to organize free repair and community-building events across various regions in the Greater Toronto Area (GTA). We share many common values with Repair Cafe, and one of the highlights of this episode is Alvin’s favorite repair story, which he shares towards the end. It’s an engaging and informative conversation that you won’t want to miss.

This season of Sustainability Disruptors is brought to you by Reverse Logistics Group (RLG), which provides recycling and circular economy solutions to meet your compliance needs. Contact them at canada@rev-log.com or visit their website at www.rev-log.com.

As part of a request by elderly family, here’s a simple 8″x2″x1″ two-part insertion container for tooth brushes and other teeth care tools. Specifically designed to hold denture brushes.

Some slight sanding work is required at the end, depending on how tight you want it to be.

STL (for 3D printing)

IPT (Autodesk Inventor part file)

STL (for 3D printing)

IPT (Autodesk Inventor part file)

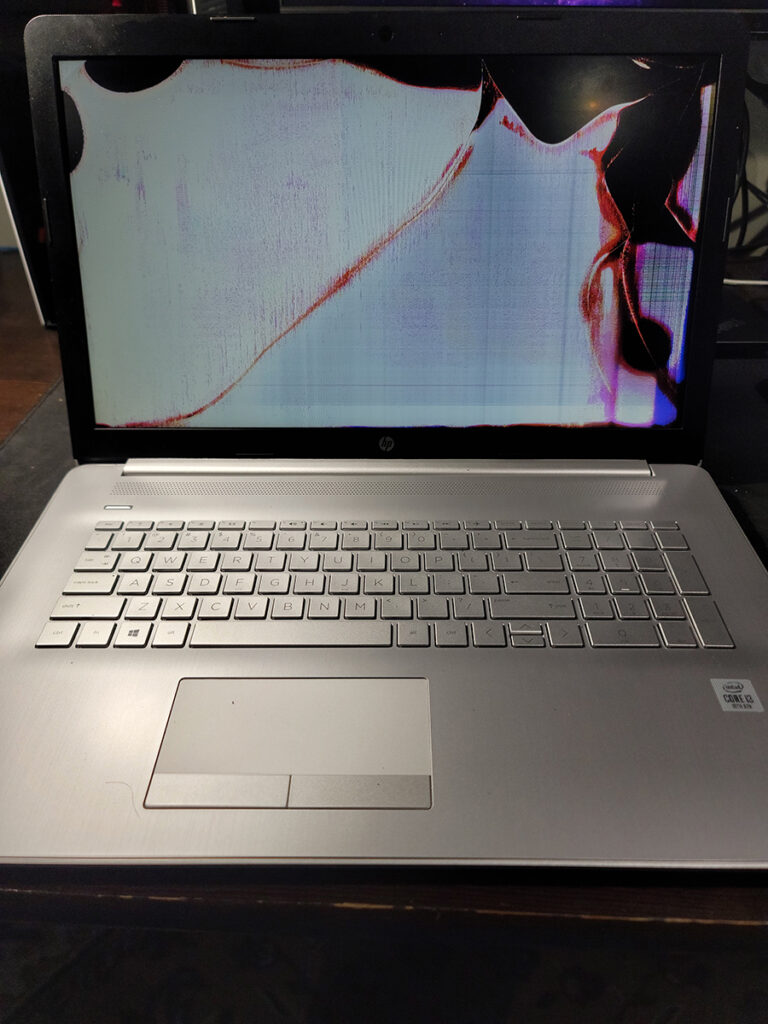

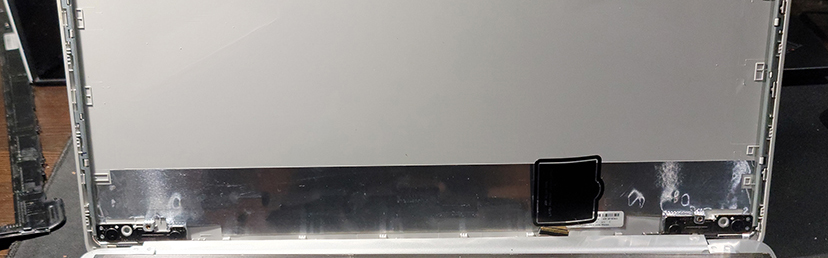

Over the past few years I’ve accepted that if a screen replacement doesn’t require a tear-down of the entire laptop chassis, then it just requires a tear-down of the entire top portion of a laptop chassis.

The HP 17-by3063st has changed my mind.

I won’t be discussing this laptop in too much detail here; those who’ve already heard my spiel on modern laptop make/models know how much I abhor consumer models compared to their business-tier counterparts.

For now, let’s look into why it was surprisingly easy to change the broken LCD display panel on this laptop.

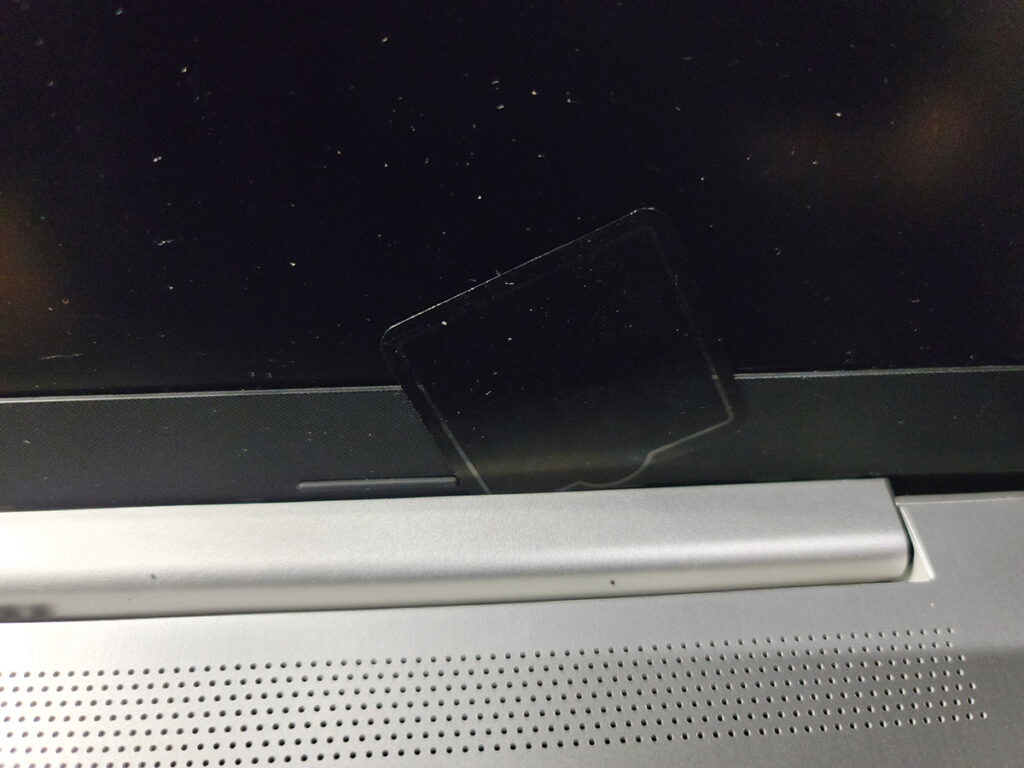

The front display bezel is held in place to the rest of the top chassis with plastic clips. They’re engineered in such a way that levering the bezel by prying it upwards from within the frame releases the clips. To do so I typically use a plastic spacer, but guitar picks work great and can often be found in bulk on eBay for pretty cheap.

The challenging part came from removing the lower half of the bezel which was behind a plastic hinge cover.

A lot of tear-down documentation for <17 models involved a tear-down of the lower chassis to release the hinge from the frame so that the top assembly can be removed, and along with it this hinge cover.

However, this too is held together by clips! What are the odds.

Similar to the bezel, you can insert a plastic spacer between the hinge and the front display hinge, prying the hinge cover in the direction the display is facing.

Two things to note:

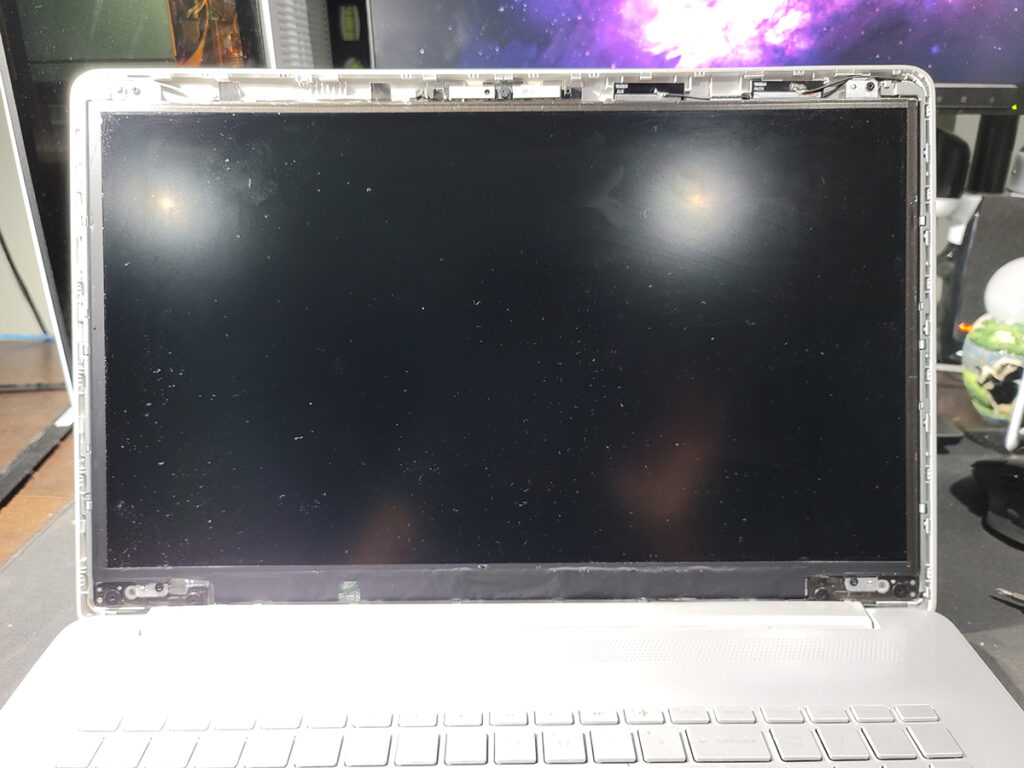

Once off, you can remove the two screws at the top and two at the bottom, ignoring the large flat-head hinge screws.

The display panel will then freely drop off, with the ribbon cable plugged into the lower right portion.

Holding the ribbon cable in place with my plastic spacer, I got my replacement panel, inserted the ribbon cable, and re-assembled the HP 17, performing everything above but in reverse.

Re-seating the bezel and hinge cover wasn’t too difficult. I’d just recommend you start by ensuring all sides of the bezel are inserted and sit flush with the rest of the front assembly, and that there are no gaps between the display and bezel.

All in all, wasn’t too difficult of a replacement.

To the owner of this laptop, I hope you find this useful.

Nothing much to say here, didn’t feel justified spending $10+ just for some plastic caps to protect my telescope from collecting dust and my eyepieces from scratching.

STL (for 3D printing)

IPT (Autodesk Inventor part file)

STL (for 3D printing)

IPT (Autodesk Inventor part file)

Not long ago I had the privilege of meeting Speo, creator of MagicDSC and overall astronomy hobbyist/fanatic, in-person. Thanks again for the crash course on sky watching – I look forward to fiddling with your project more while embracing the hidden beauty of the night sky.

Suffice to say, I’m now the owner of a 6″ Dobsonian SkyWatcher.

I’ve been fascinated with the night sky for the longest time considering the amount of time I spend awake during it from my abysmal sleep schedule.

I thought it’s about time I tapped into a proper tool to observe its many mysteries, and here we go.

Moving forward, I’ll be documenting my journey with this cool piece of tech under #astronomy – including the following on how I improved a cheap red dot viewfinder with an old ball point pen.

While super useful and affordable, I noticed it had quite a bit of wobble in both the X and Y.

You can easily fix this using a few small spacers, but I didn’t want to have to disassemble it again and remove a spacer if I need to re-align it.

For that reason, I ended up stealing the spring from a non-functional ball point pen and cutting it into small segments that I put between the X and Y knobs and the viewfinder frame. After doing that, the wobble was pretty much non-existent.

You can also re-position the spring between the head of the screw and viewfinder body assuming you find yourself calibrating -X/-Y more than +X/+Y.

I can now release tension on each axis without having to adjust how many spacers I use to align it.

If you haven’t listened to the Liquicity Drum & Bass Yearmix 2021 which was mixed by Maduk, you don’t know what you’re missing.

Together, we went over some basic Kubernetes concepts, API resources, and the problems they solved relative to non-cloud native architecture.

As part of this workshop I created some simple K8s cheat-sheets/material, along with a whole new Kubernetes cluster which I exposed as part of a hands-on lab.

Participants, which included high school/post-secondary students, found the workshop pretty cool. I’ve heard feedback post-workshop that their friends thought they were hacking as they used kubectl.

Kids, kubectl responsibly.

I also noted feedback that more detail in certain topics would’ve been great, which I would’ve definitely wanted. I barely managed to get through everything I wanted to in 60 minutes 🙁

The primary goal was to explore common questions/problems one hosting an application may encounter, and how Kubernetes can address them.

All in all, it was a great experience that I look forward to doing more often – not particular to Kubernetes, but cloud native architecture in general. As a cloud engineer, I’ve learned the hard way that this is anything but straight-forward, and I hope others can learn from my struggles as they embark on their journeys in this space.

Workshop aside, I wanted to chat a little about the lab environment I created since I got questions post-lab from other Kubernetes aficionados.

I’ve been running Tanzu Community Edition on my home lab since its early days, both as a passion project having contributed to it, and because it integrates well with my home vSphere lab. As such, I already had a management cluster serving as my ‘cluster operator’, which I use to create/destroy clusters on the fly. This was no different, with the exception of creating a user fit for this public lab.

I should probably preface this by saying that this is NOT something I would encourage for any kind of persistent environment.

While this might be less dangerous if it was behind a VPN, the cluster we’re about to create has the risk of leaking important vSphere configuration data, along with the credentials of the user that cluster resources will be created under. For example, if a user can fetch vsphere-config-secret from kube-system namespace, consider your vSphere environment compromised.
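One way to test for that specific exposure, once a participant credential exists, is to ask the API server directly. This is a minimal sketch that assumes `kubectl` is on the PATH; the helper name and the kubeconfig path in the usage comment are placeholders of mine.

```shell
# can_read_vsphere_secret KUBECONFIG_PATH
# Asks the API server whether the identity in KUBECONFIG_PATH may read the
# vSphere credential secret. `kubectl auth can-i` exits 0 for "yes" and
# non-zero for "no", so the exit status is passed straight through.
can_read_vsphere_secret() {
    kubectl --kubeconfig "$1" auth can-i get secret/vsphere-config-secret \
        --namespace kube-system >/dev/null
}

# Hypothetical usage:
# if can_read_vsphere_secret ./tohacks.kubeconfig; then
#     echo "participant can read vSphere credentials, do not expose this cluster"
# fi
```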

This process assumes the following:

VSPHERE_CONTROL_PLANE_ENDPOINT resolves to some internal IP that’ll be used for your workload cluster API, and resolves to your public IP externally

Step 1. Perform the following command using your cluster config on your Tanzu bootstrap host.

This will also be the longest step, depending on your hardware.

tanzu cluster create open --file ./open.k8s.alvinr.ca -v 9

TOHacks Tanzu workload cluster config

AVI_CA_DATA_B64: ""

AVI_CLOUD_NAME: ""

AVI_CONTROL_PLANE_HA_PROVIDER: ""

AVI_CONTROLLER: ""

AVI_DATA_NETWORK: ""

AVI_DATA_NETWORK_CIDR: ""

AVI_ENABLE: "false"

AVI_LABELS: ""

AVI_MANAGEMENT_CLUSTER_VIP_NETWORK_CIDR: ""

AVI_MANAGEMENT_CLUSTER_VIP_NETWORK_NAME: ""

AVI_PASSWORD: ""

AVI_SERVICE_ENGINE_GROUP: ""

AVI_USERNAME: ""

CLUSTER_CIDR: 100.96.0.0/11

CLUSTER_NAME: open

CLUSTER_PLAN: dev

CONTROL_PLANE_MACHINE_COUNT: "3"

ENABLE_AUDIT_LOGGING: "false"

ENABLE_CEIP_PARTICIPATION: "false"

ENABLE_MHC: "true"

IDENTITY_MANAGEMENT_TYPE: none

INFRASTRUCTURE_PROVIDER: vsphere

LDAP_BIND_DN: ""

LDAP_BIND_PASSWORD: ""

LDAP_GROUP_SEARCH_BASE_DN: ""

LDAP_GROUP_SEARCH_FILTER: ""

LDAP_GROUP_SEARCH_GROUP_ATTRIBUTE: ""

LDAP_GROUP_SEARCH_NAME_ATTRIBUTE: cn

LDAP_GROUP_SEARCH_USER_ATTRIBUTE: DN

LDAP_HOST: ""

LDAP_ROOT_CA_DATA_B64: ""

LDAP_USER_SEARCH_BASE_DN: ""

LDAP_USER_SEARCH_FILTER: ""

LDAP_USER_SEARCH_NAME_ATTRIBUTE: ""

LDAP_USER_SEARCH_USERNAME: userPrincipalName

OIDC_IDENTITY_PROVIDER_CLIENT_ID: ""

OIDC_IDENTITY_PROVIDER_CLIENT_SECRET: ""

OIDC_IDENTITY_PROVIDER_GROUPS_CLAIM: ""

OIDC_IDENTITY_PROVIDER_ISSUER_URL: ""

OIDC_IDENTITY_PROVIDER_NAME: ""

OIDC_IDENTITY_PROVIDER_SCOPES: ""

OIDC_IDENTITY_PROVIDER_USERNAME_CLAIM: ""

OS_ARCH: amd64

OS_NAME: photon

OS_VERSION: "3"

SERVICE_CIDR: 100.64.0.0/13

TKG_HTTP_PROXY_ENABLED: "false"

VSPHERE_CONTROL_PLANE_DISK_GIB: "40"

VSPHERE_CONTROL_PLANE_ENDPOINT: k8s.alvinr.ca

VSPHERE_CONTROL_PLANE_MEM_MIB: "16384"

VSPHERE_CONTROL_PLANE_NUM_CPUS: "4"

VSPHERE_DATACENTER: <>

VSPHERE_DATASTORE: <>

VSPHERE_FOLDER: <>

VSPHERE_NETWORK: <>

VSPHERE_PASSWORD: <>

VSPHERE_RESOURCE_POOL: <>

VSPHERE_SERVER: <>

VSPHERE_SSH_AUTHORIZED_KEY: |
  ssh-rsa <>

VSPHERE_TLS_THUMBPRINT: <>

VSPHERE_USERNAME: <>

VSPHERE_WORKER_DISK_GIB: "40"

VSPHERE_WORKER_MEM_MIB: "8192"

VSPHERE_WORKER_NUM_CPUS: "4"

WORKER_MACHINE_COUNT: "3"

* items denoted with <> have been redacted

Step 2. Retrieve the admin credential. You might want this later (plus, we’ll steal certificate authority data from it in Step 7).

tanzu cluster kubeconfig get open --admin --export-file admin-config-open

Step 3. Generate key and certificate signing request (CSR).

Here, we’ll prepare to create a native K8s credential.

Pay attention to the org element, k8s.alvinr.ca; this will be the subject we bind our Role to later.

openssl genrsa -out tohacks.key 2048

openssl req -new -key tohacks.key -out tohacks.csr -subj "/CN=tohacks/O=tohacks/O=k8s.alvinr.ca"

Step 4. Open a shell session to one of your control plane nodes.

Feel free to do so however you want; my bootstrapping host had kubectl node-shell from another project, so I ended up using that.

Copy both the key and CSR into this node.
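Since the O= entries in the subject are what RBAC will later treat as groups, it can be worth a dry run of the CSR-and-sign flow before touching the real cluster CA. This is a minimal sketch that generates a throwaway CA on the spot; the `sign_dry_run` helper and the `demo-ca.*` files are my own stand-ins for illustration, not the CA under /etc/kubernetes/pki.

```shell
# sign_dry_run
# Runs the key -> CSR -> signed cert flow against a throwaway CA in a temp
# directory and prints the subject of the signed certificate on success.
sign_dry_run() {
    workdir=$(mktemp -d) || return 1
    (
        cd "$workdir" || exit 1
        # Throwaway self-signed CA, standing in for /etc/kubernetes/pki/ca.*
        openssl req -x509 -newkey rsa:2048 -nodes -days 1 \
            -keyout demo-ca.key -out demo-ca.crt -subj "/CN=demo-ca" 2>/dev/null || exit 1
        # Same key and CSR commands as Step 3
        openssl genrsa -out tohacks.key 2048 2>/dev/null || exit 1
        openssl req -new -key tohacks.key -out tohacks.csr \
            -subj "/CN=tohacks/O=tohacks/O=k8s.alvinr.ca" || exit 1
        # Sign with the throwaway CA, then confirm the chain validates
        openssl x509 -req -CA demo-ca.crt -CAkey demo-ca.key -CAcreateserial \
            -days 7 -in tohacks.csr -out tohacks.crt 2>/dev/null || exit 1
        openssl verify -CAfile demo-ca.crt tohacks.crt >/dev/null || exit 1
        openssl x509 -in tohacks.crt -noout -subject
    )
    status=$?
    rm -rf "$workdir"
    return $status
}
```

Running `sign_dry_run` should print a subject line carrying both O= values. The same `openssl x509 -in tohacks.crt -noout -subject` check can be pointed at the real certificate once it has been signed.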

Step 5. Sign the CSR.

My lab only had to live for the duration of TOHacks 2022 Hype Week, so 7 days of validity was enough.

openssl x509 -req -CA /etc/kubernetes/pki/ca.crt -CAkey /etc/kubernetes/pki/ca.key -CAcreateserial -days 7 -in tohacks.csr -out tohacks.crt

Step 6. Encode key and cert in base64; we’ll use these later to create our kubeconfig.

Copy these two back to your host.

cat tohacks.key | base64 | tr -d '\n' > tohacks.b64.key

cat tohacks.crt | base64 | tr -d '\n' > tohacks.b64.crt

Step 7. Build kubeconfig manifest.

Here’s an example built for this lab; this is what I distributed to my participants.

We didn’t bother going over importing this credential into their kubeconfig path; we just provided it manually to every command via the --kubeconfig flag.
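Rather than pasting the three base64 blobs in by hand, the kubeconfig that follows could also be stamped out from the Step 2 and Step 6 outputs. This is a minimal sketch; `render_kubeconfig` is a helper name of my own, and the server URL and names simply mirror the example in this post.

```shell
# render_kubeconfig CA_B64 CERT_B64 KEY_B64
# Writes a single-user kubeconfig to stdout. All three arguments are
# base64 strings: the CA data from the admin kubeconfig (Step 2) and the
# contents of tohacks.b64.crt / tohacks.b64.key (Step 6).
render_kubeconfig() {
    cat <<EOF
apiVersion: v1
kind: Config
clusters:
- name: public
  cluster:
    certificate-authority-data: $1
    server: https://k8s.alvinr.ca:6443
contexts:
- name: tohacks
  context:
    cluster: public
    namespace: default
    user: tohacks
current-context: tohacks
preferences: {}
users:
- name: tohacks
  user:
    client-certificate-data: $2
    client-key-data: $3
EOF
}

# Hypothetical usage (CA_B64 copied out of admin-config-open):
# render_kubeconfig "$CA_B64" "$(cat tohacks.b64.crt)" "$(cat tohacks.b64.key)" > tohacks.kubeconfig
# kubectl --kubeconfig tohacks.kubeconfig get pods
```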

TOHacks kubeconfig credential

---
apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: <ADMIN_KUBECONFIG_STEP_2>
    server: https://k8s.alvinr.ca:6443
  name: public
contexts:
- context:
    cluster: public
    namespace: default
    user: tohacks
  name: tohacks
current-context: tohacks
kind: Config
preferences: {}
users:
- name: tohacks
  user:
    client-certificate-data: <tohacks.b64.crt_STEP_6>
    client-key-data: <tohacks.b64.key_STEP_6>

Step 8. Create a Role.

Here is where we define what our lab participants can do. In this case, I created a Role scoped to the default namespace, granting them pseudo-reader access (they can only use the get/watch/list verbs).

TOHacks read-only participant role

---
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  namespace: default
  name: tohacks
rules:
- apiGroups: ["*"]
  resources: ["*"]
  verbs: ["get", "watch", "list"]

Step 9. Create a RoleBinding.

Here is where we define our subject, in this case the org used in the signed cert file from the CSR generated in Step 3.

TOHacks read-only participant role binding

---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: tohacks
  namespace: default
subjects:
- kind: Group
  name: k8s.alvinr.ca
  apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: Role
  name: tohacks
  apiGroup: rbac.authorization.k8s.io

Step 10. Deploy roles.

kubectl apply -f tohacks-role.yaml

kubectl apply -f tohacks-rolebinding.yaml

Step 11. Expose K8s API (port 6443).

Finally, the part I disliked the most: exposing my cluster API on the host defined by VSPHERE_CONTROL_PLANE_ENDPOINT in Step 1.

I’m sure there are plenty of better ways to do this; another one that occurred to me was sitting it behind a reverse proxy like nginx, since K8s API traffic is still standard HTTP over TLS.
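Before opening the port, it may be worth confirming that the participant identity can do what the Role intends and nothing more. This is a minimal sketch using kubectl’s impersonation flags from the admin context; the `check_participant` helper and the verb/resource pairs in the usage comments are illustrative picks of mine.

```shell
# check_participant VERB RESOURCE [NAMESPACE]
# Impersonates the lab user and the groups from the signed certificate,
# then prints "<verb> <resource> (<namespace>): yes|no".
check_participant() {
    ns=${3:-default}
    if kubectl auth can-i "$1" "$2" --namespace "$ns" \
        --as tohacks --as-group tohacks --as-group k8s.alvinr.ca >/dev/null 2>&1; then
        echo "$1 $2 ($ns): yes"
    else
        echo "$1 $2 ($ns): no"
    fi
}

# Hypothetical usage, run with the admin kubeconfig from Step 2:
# check_participant list pods                  # intended: yes
# check_participant delete pods                # intended: no
# check_participant get secrets kube-system    # intended: no
```

Note that with a wildcard read Role, `check_participant get secrets` in the default namespace would be expected to answer yes, so keep anything sensitive out of that namespace.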

When TOHacks 2022 concluded, the lab was swiftly sent to the void it came from.

tanzu cluster delete open